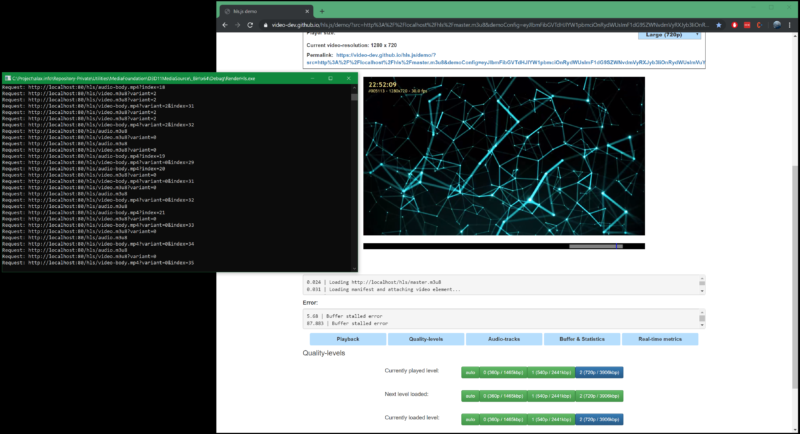

Further experiments with Direct3D 11 shadertoy rendering: integration with the HTTP Server API and on-demand serving of parts of an HTTP Live Streaming (HLS) asset, using Media Foundation with hardware video encoding. An hls.js player is able to read and play the content, including stepping between quality levels.
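Stepping between quality levels in hls.js relies on a master playlist that lists one media playlist per quality level. The sketch below shows how such a playlist could be generated. It is not the author's code: the `Rendition` struct, the `BuildMasterPlaylist` function, the URIs, bitrates and `CODECS` strings are hypothetical, and only the tags themselves come from the HLS specification (RFC 8216).

```cpp
#include <cassert>
#include <sstream>
#include <string>
#include <vector>

// One quality level ("variant stream") advertised to the player.
// All values are illustrative assumptions, not the author's configuration.
struct Rendition {
    int width;
    int height;
    int bandwidth;       // peak bits per second, reported in BANDWIDTH
    std::string codecs;  // e.g. "avc1.640028" for H.264 High profile, level 4.0
    std::string uri;     // media playlist URI for this quality level
};

// Builds an HLS master playlist listing every rendition; hls.js reads the
// EXT-X-STREAM-INF entries and can switch between them.
std::string BuildMasterPlaylist(const std::vector<Rendition>& renditions) {
    assert(!renditions.empty());
    std::ostringstream out;
    out << "#EXTM3U\n";
    for (const Rendition& r : renditions) {
        out << "#EXT-X-STREAM-INF:BANDWIDTH=" << r.bandwidth
            << ",RESOLUTION=" << r.width << 'x' << r.height
            << ",CODECS=\"" << r.codecs << "\"\n"
            << r.uri << '\n';
    }
    return out.str();
}
```

Because the server only renders what is requested, each URI in this list can point at a virtual playlist whose segments do not exist until a client asks for them.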

It is a sort of Google Stadia for shadertoys, with video on demand and possibly low latency. The latency achievable with standard HLS (I am not following the latest HTTP/2 extensions for lower-latency HLS) is, of course, nowhere near the real ultra-low latency we have in Rainway for web-based game streaming, which gets as low as 10 to 20 milliseconds with HTML5 delivery. However, the approach proves that it is possible to deliver content with on-demand rendering.
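A rough way to see why standard HLS cannot approach tens of milliseconds: RFC 8216 advises a client not to start playback at a segment that begins less than three target durations from the end of a live playlist (hls.js uses a similar default of three segments), so latency is dominated by segment duration rather than by rendering. The function below is a hypothetical back-of-the-envelope model, not a measurement; it ignores network transfer and decoding time.

```cpp
#include <cassert>

// Rough lower bound on live latency for standard (non-low-latency) HLS.
// Assumptions: the player starts `segmentsBehind` target durations behind the
// end of the playlist, and a segment is published only once it has been fully
// rendered and encoded (`encodeSeconds` after its last frame).
// Network transfer and decode time are ignored.
double EstimateHlsLatencySeconds(double targetDurationSeconds,
                                 int segmentsBehind,
                                 double encodeSeconds) {
    assert(targetDurationSeconds > 0 && segmentsBehind >= 0 && encodeSeconds >= 0);
    return segmentsBehind * targetDurationSeconds + encodeSeconds;
}
```

Even with one-second segments and three segments of holdback, the estimate stays in whole seconds, which is why segment-based HLS and a 10 to 20 ms streaming pipeline are different leagues.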

Perhaps the approach could also be used to broadcast live content with server-side, GPU-based post-processing. With a single viewer it is easy to change quality levels, because the client requests the next segment in the new quality without also downloading it in another one, so only one quality has to be encoded at a time. Consumer-grade H.264/H.265 encoders are not normally designed to encode much faster than realtime (1920×1080@100 for H.264 is a reasonable figure to align expectations with, with perhaps only higher-end NVIDIA cards offering more). A quality change is therefore easy to handle, but encoding several qualities at a time might be an excessive load.
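To put the 1920×1080@100 figure into numbers, the sketch below compares the total pixel rate of the streams being encoded against that ceiling. Treating encoder throughput as proportional to pixels per second is a simplifying assumption (real encoders also depend on preset, profile and session limits), and `EncodeLoad` and `FitsEncoderBudget` are hypothetical names.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Resolution and frame rate of one stream the encoder has to produce.
struct EncodeLoad {
    int width;
    int height;
    int fps;
};

// Pixels per second the encoder must process for one stream.
std::int64_t PixelRate(const EncodeLoad& load) {
    return static_cast<std::int64_t>(load.width) * load.height * load.fps;
}

// Rough check, assuming throughput scales linearly with pixel rate and that
// the encoder tops out around 1920x1080@100 (the figure quoted in the text).
bool FitsEncoderBudget(const std::vector<EncodeLoad>& streams,
                       const EncodeLoad& ceiling = {1920, 1080, 100}) {
    std::int64_t total = 0;
    for (const EncodeLoad& s : streams) {
        total += PixelRate(s);
    }
    return total <= PixelRate(ceiling);
}
```

Under this model a single 1080p60 stream already consumes 60% of the budget (124,416,000 of 207,360,000 pixels per second), so encoding one quality at a time fits comfortably while a full ladder of simultaneous qualities quickly does not.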

The overall simplicity of HLS syntax makes it possible to shape the virtual asset flexibly: it can be a true live asset, or a static, fixed-length, seekable asset rendered on demand from any randomly accessed point.
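The difference between the two shapes is mostly a few playlist tags. In the sketch below, which is an illustration rather than the author's implementation, a VOD-style playlist declares `EXT-X-PLAYLIST-TYPE:VOD` and ends with `EXT-X-ENDLIST`, so the player knows the full length and can seek; a live-style playlist omits both and advances `EXT-X-MEDIA-SEQUENCE` as a sliding window. The `segment_N.ts` naming, the MPEG-TS container and both function names are hypothetical.

```cpp
#include <cassert>
#include <sstream>
#include <string>

// Builds a media playlist for a virtual asset whose segments are rendered on
// demand when requested (hypothetical "segment_N.ts" naming).
// vod = true:  fixed-length, seekable asset (PLAYLIST-TYPE:VOD + ENDLIST).
// vod = false: live-style window of segments starting at firstSequence.
std::string BuildMediaPlaylist(int segmentCount, int segmentSeconds,
                               bool vod, long firstSequence = 0) {
    assert(segmentCount > 0 && segmentSeconds > 0);
    const long first = vod ? 0 : firstSequence;
    std::ostringstream out;
    out << "#EXTM3U\n"
        << "#EXT-X-VERSION:3\n"
        << "#EXT-X-TARGETDURATION:" << segmentSeconds << '\n'
        << "#EXT-X-MEDIA-SEQUENCE:" << first << '\n';
    if (vod) out << "#EXT-X-PLAYLIST-TYPE:VOD\n";
    for (int i = 0; i < segmentCount; ++i) {
        out << "#EXTINF:" << segmentSeconds << ".0,\n"
            << "segment_" << first + i << ".ts\n";
    }
    if (vod) out << "#EXT-X-ENDLIST\n";
    return out.str();
}

// Random access: when segment N is requested, the renderer can set the shader
// clock to N * segmentSeconds and render and encode just that slice.
double SegmentStartTime(long sequenceNumber, int segmentSeconds) {
    return static_cast<double>(sequenceNumber) * segmentSeconds;
}
```

Since nothing exists until a segment URI is requested, the same rendering path serves both shapes; only the playlist text and the mapping from sequence number to shader time differ.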

I would also like to take this opportunity to mention another beautiful shader, “The Universe Within” by Martijn “BigWings” Steinrucken, which is running in my screenshot.