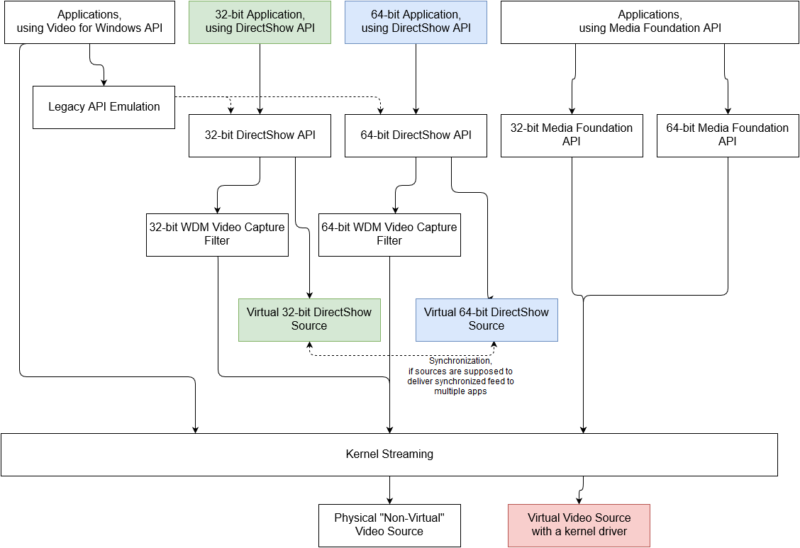

The application takes advantage of three powerful Windows APIs at once:

MediaFoundationDesktopRecorder initializes a desktop duplication session and sends the captured desktop images to an H.264 video encoder, producing a standard MP4 recording. Optionally, it can add an audio track captured from one of the standard audio inputs.

The best performance is achieved with a hardware H.264 encoder: not only does the hardware encoder itself perform better, but desktop images are also transferred to the encoder efficiently, without being copied through system memory. With suitable hardware, recording is quite efficient.

There are certain limitations: the Desktop Duplication API requires Windows 8 or later, and encoder availability depends on hardware and OS version. The application lets the API pick the encoder automatically and, in the worst case, falls back to a software encoder, which typically comes with a performance hit.

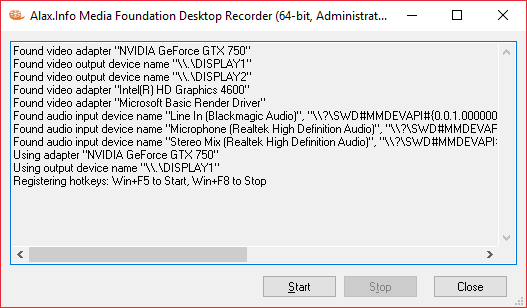

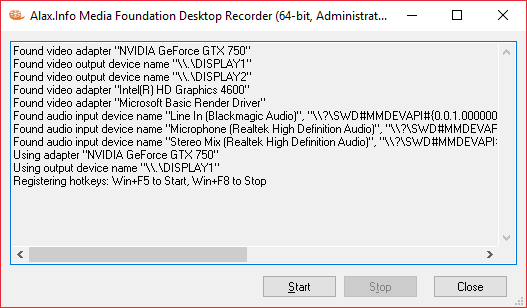

When started, the application prints initial information, in particular regarding the availability of devices, and appends further output as actions and events take place.

The application uses a configuration file with the same name and location as the application itself, but with an .INI extension. Changes to the configuration file take effect when the application is restarted.
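The naming rule above can be illustrated with a minimal sketch. The install path is hypothetical, and `config_path_for` is a name invented for this example; it only shows how "same name and location, .INI extension" maps to a path.

```python
from pathlib import PureWindowsPath

def config_path_for(executable: str) -> PureWindowsPath:
    """Return the .ini path next to the executable, with the same base name."""
    return PureWindowsPath(executable).with_suffix(".ini")

# Hypothetical install location, used only for illustration.
print(config_path_for(r"C:\Tools\MediaFoundationDesktopRecorder.exe"))
# C:\Tools\MediaFoundationDesktopRecorder.ini
```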

The application registers the Win+F5 and Win+F8 hotkeys globally to start/stop recording while the application is in the background (that is, while the user interacts with another application).

The application generates .MP4 files in the directory where it is located. Each file has a video track and, depending on settings, one additional audio track. Video is taken from one of the monitors, and audio from one of the available standard audio input devices.

The application also generates a log file at one of the following locations:

- C:\ProgramData\MediaFoundationDesktopRecorder.log

- C:\Users\$(UserName)\AppData\Local\MediaFoundationDesktopRecorder.log (in case the first path above is inaccessible, esp. due to insufficient permissions)
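The fallback between the two log locations might look roughly like the sketch below. This is an illustration of the "try the first path, otherwise the second" idea only, not the application's actual code; `open_log` is a hypothetical helper, and temporary directories stand in for the real locations.

```python
import os
import tempfile

def open_log(candidates):
    """Open the first candidate path that can be appended to; skip the rest."""
    for path in candidates:
        try:
            return open(path, "a", encoding="utf-8")
        except OSError:
            continue  # e.g. missing directory or insufficient permissions
    raise OSError("no writable log location")

# Demonstration with temporary directories standing in for the real locations.
with tempfile.TemporaryDirectory() as root:
    inaccessible = os.path.join(root, "missing", "recorder.log")  # parent does not exist
    fallback = os.path.join(root, "recorder.log")
    with open_log([inaccessible, fallback]) as log:
        log.write("started\n")
        chosen = log.name
    print(chosen == fallback)  # True
```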

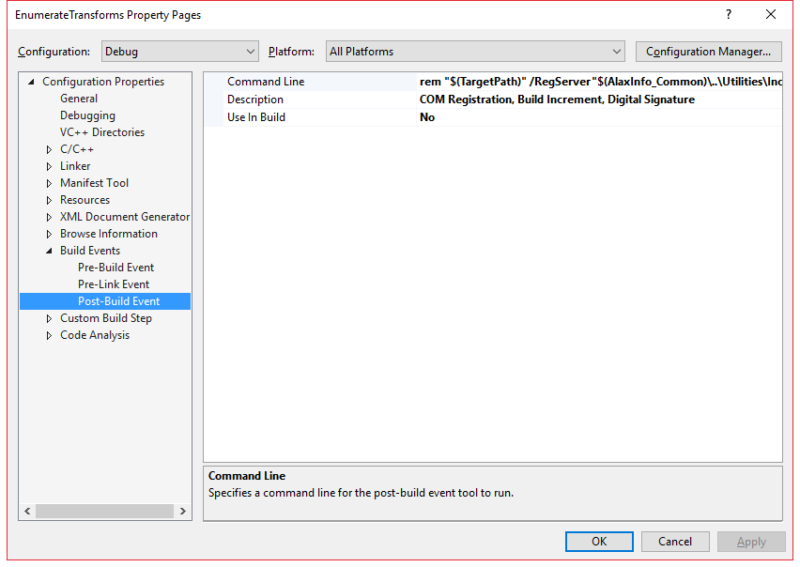

Configuration

The configuration .INI file may contain a few settings that set up and alter the behavior of the application:

[Input]

;Video Adapter Description=NVIDIA GeForce GTX 750

Video Output Device Name=\\.\DISPLAY2

;Audio Friendly Name=Stereo Mix (Realtek High Definition Audio)

When started, the application enumerates the available video and audio inputs (“found video…”, “found audio…”). These discoveries are compared against the configuration file settings in order to identify the monitor for recording and, possibly, the audio input device.

The default behavior, when settings do not instruct otherwise, is to take the first available monitor. By default, no audio is recorded; audio is recorded and added to the resulting file only if an input device is specified explicitly.

The application also prints which devices are used for recording (“using adapter…”).
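To make the selection rules concrete, here is a small sketch that reads the [Input] section and applies the defaults described above. It is an illustration under assumptions: `read_input_settings` and `select_devices` are hypothetical names, the Video Adapter Description setting is ignored for brevity, and falling back to the first monitor when the configured one is not found is a guess rather than documented behavior.

```python
import configparser

SAMPLE_INI = r"""
[Input]
;Video Adapter Description=NVIDIA GeForce GTX 750
Video Output Device Name=\\.\DISPLAY2
;Audio Friendly Name=Stereo Mix (Realtek High Definition Audio)
"""

def read_input_settings(text):
    """Parse the [Input] section; lines starting with ';' are comments."""
    # "=" is the only delimiter and interpolation is off, so values stay verbatim.
    parser = configparser.ConfigParser(delimiters=("=",), interpolation=None)
    parser.read_string(text)
    # configparser lower-cases option names, so keys below are lower case.
    return dict(parser["Input"]) if parser.has_section("Input") else {}

def select_devices(settings, monitors, audio_inputs):
    """Pick a monitor (first one by default) and an audio input (none by default).

    monitors is assumed to be non-empty.
    """
    wanted_monitor = settings.get("video output device name")
    monitor = next((m for m in monitors if m == wanted_monitor), monitors[0])
    wanted_audio = settings.get("audio friendly name")
    audio = next((a for a in audio_inputs if a == wanted_audio), None)
    return monitor, audio

settings = read_input_settings(SAMPLE_INI)
monitor, audio = select_devices(
    settings, [r"\\.\DISPLAY1", r"\\.\DISPLAY2"], ["Microphone"]
)
print(monitor, audio)  # \\.\DISPLAY2 None
```

With the audio line left commented out, no audio device is selected, which matches the "no audio by default" rule.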

[Format]

;Video Frame Rate=30000

;Video Frame Rate Denominator=1001

Video Bitrate=4096000

Video Texture Pool Capacity=24

Video Throttle=70

Audio Bitrate=192000

The default behavior is to identify the monitor’s refresh rate and produce the output file with video at the same frame rate. The Video Frame Rate and Video Frame Rate Denominator settings override the frame rate of the target file. With the former value only, it is the frame rate; with both values, they define a ratio, e.g. values of 30000 and 1001 result in a 29.97 fps file.
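The frame rate rules can be worked through as exact fractions. The function below is a sketch with a hypothetical name; how the application treats a denominator given without a frame rate is not documented, so the sketch simply ignores it in that case.

```python
from fractions import Fraction

def output_frame_rate(refresh_rate, rate=None, denominator=None):
    """Return the output frame rate as an exact fraction.

    refresh_rate -- the monitor's refresh rate, used when no override is set
    rate         -- "Video Frame Rate" setting, if present
    denominator  -- "Video Frame Rate Denominator" setting, if present
    """
    if rate is None:
        return Fraction(refresh_rate)
    return Fraction(rate, denominator if denominator else 1)

print(float(output_frame_rate(Fraction(59954, 1000))))      # 59.954
print(round(float(output_frame_rate(60, 30000, 1001)), 2))  # 29.97
print(output_frame_rate(60, 30))                            # 30
```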

Frame rate reduction is a good way to reduce encoding complexity and overall graphics subsystem load.

Bitrate values define respective bitrates for the encoded content.
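As a rough worked example of what the bitrate values mean for file size, the sketch below assumes both settings are in bits per second (the units are not stated here) and ignores container overhead and encoder rate control, so real files will differ.

```python
def estimated_bytes(seconds, video_bitrate=4096000, audio_bitrate=0):
    """Rough payload size: bits per second times duration, divided by 8."""
    return (video_bitrate + audio_bitrate) * seconds // 8

print(estimated_bytes(60))                        # 30720000
print(estimated_bytes(60, audio_bitrate=192000))  # 32160000
```

Under these assumptions, one minute of recording with the sample settings comes to roughly 30 MB of video, or about 32 MB with audio.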

Details

As recording proceeds, the application grabs new desktop snapshots and sends them to the encoder. It makes no specific guarantees about frame rate stability, or about how the frame rate is reduced when the graphics subsystem is overloaded. When the load is excessive, some frames may be lost, but the output file is expected to remain playable.

The application provides additional information when it creates a file, for example:

Using Direct3D 11 at feature level D3D_FEATURE_LEVEL_11_0

Using Desktop Duplication mode: Resolution 1680 x 1050, Refresh Rate 59954/1000, Format DXGI_FORMAT_B8G8R8A8_UNORM

Using path “D:\Projects\...\Output\20160707-070707.mp4”

Using video transform Direct3D 11 Aware, Category MFT_CATEGORY_VIDEO_PROCESSOR, Input MFVideoFormat_ARGB32, Output MFVideoFormat_NV12

Using video transform NVIDIA H.264 Encoder MFT, Direct3D 11 Aware, Category MFT_CATEGORY_VIDEO_ENCODER, Input MFVideoFormat_NV12, Output MFVideoFormat_H264

Started writing…

PPP frames written (QQQ frame timeouts, RRR early frame skips, SSS late frame skips)

Stopped writing

Output file size is TTT bytes
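If the frame statistics need to be collected from the log, the summary line can be parsed with a regular expression. This is a sketch based only on the line format shown above; the numbers in the sample line are made up for illustration, and `parse_stats` is a hypothetical helper.

```python
import re

STATS = re.compile(
    r"(\d+) frames written \((\d+) frame timeouts, "
    r"(\d+) early frame skips, (\d+) late frame skips\)"
)

def parse_stats(line):
    """Return the four counters from a summary line, or None if it does not match."""
    match = STATS.search(line)
    if match is None:
        return None
    written, timeouts, early, late = map(int, match.groups())
    return {
        "written": written,
        "timeouts": timeouts,
        "early_skips": early,
        "late_skips": late,
    }

# Illustrative line; the counts are invented, not taken from a real recording.
sample = "1800 frames written (12 frame timeouts, 3 early frame skips, 0 late frame skips)"
print(parse_stats(sample)["written"])  # 1800
```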

Once recording has started, the application might encounter a condition where a certain hardware resource is no longer available, e.g. the desktop itself is locked by the user. The application will then close the file and attempt to automatically restart recording into a new file. The attempts continue until the user explicitly stops recording.
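The restart behavior amounts to a retry loop around a single recording attempt. The sketch below is a simplified model, not the application's code: `record_once` and `stop_requested` are hypothetical callables, a lost resource is modelled as a `RuntimeError`, and a real implementation would likely wait between attempts.

```python
def record_until_stopped(record_once, stop_requested):
    """Call record_once() repeatedly until stop_requested() returns True.

    record_once    -- hypothetical callable; records into a new file and
                      raises RuntimeError if a resource is lost
    stop_requested -- hypothetical callable; True once the user stops recording
    Returns the number of recording attempts (one file per attempt).
    """
    attempts = 0
    while not stop_requested():
        attempts += 1
        try:
            record_once()
        except RuntimeError:
            continue  # resource lost: the file is closed, try again with a new one
    return attempts

# Simulation: the desktop is "locked" twice, then the user stops recording.
outcomes = iter([RuntimeError("desktop locked"), RuntimeError("desktop locked"), None])
state = {"stopped": False}

def simulated_record_once():
    outcome = next(outcomes)
    if outcome is not None:
        raise outcome
    state["stopped"] = True  # the simulated user stops during the third attempt

print(record_until_stopped(simulated_record_once, lambda: state["stopped"]))  # 3
```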

The application does NOT do the following (among things it could):

- the application records from one monitor only; to record from two at a time, it is possible to start several instances, however the resulting files will not be synchronized

- the application does not provide options to record a single window, to crop a section of the monitor image, or to scale the image down

- the application does not offer a choice of video encoder (e.g. when there are two or more hardware H.264 encoders); it always uses the encoder picked by the system

- the only video encoding setting the application offers is bitrate

- the application does not provide flexibility in audio encoding settings; it also expects the audio device to remain available throughout the entire recording session (in particular, not to be unplugged while recording)

References (Informational)

Download links

- Binaries:

- Source code:

- There is no source code publicly available for this application, sorry about that.